Neural Thermodynamic Laws for Large Language Model Training

This paper is a preprint and has not been certified by peer review.

Ziming Liu, Yizhou Liu, Jeff Gore, Max Tegmark

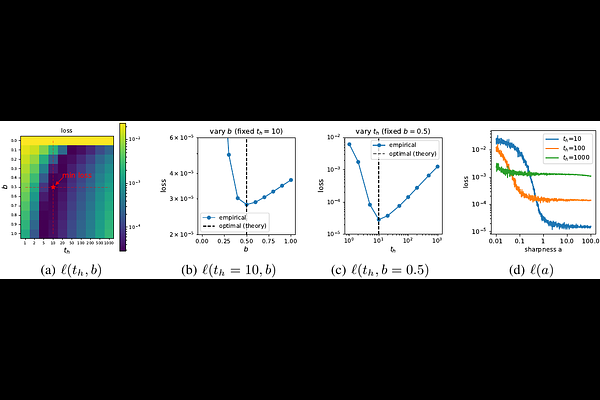

Abstract

Beyond neural scaling laws, little is known about the laws underlying large language models (LLMs). We introduce Neural Thermodynamic Laws (NTL), a new framework that offers fresh insights into LLM training dynamics. On the theoretical side, we demonstrate that key thermodynamic quantities (e.g., temperature, entropy, heat capacity, thermal conduction) and classical thermodynamic principles (e.g., the three laws of thermodynamics and the equipartition theorem) naturally emerge under river-valley loss landscape assumptions. On the practical side, this scientific perspective yields intuitive guidelines for designing learning rate schedules.