Bridging the neural synchronization to linguistic structures and natural speech comprehension

Martorell, J.; Di Liberto, G.; Molinaro, N.; Meyer, L.

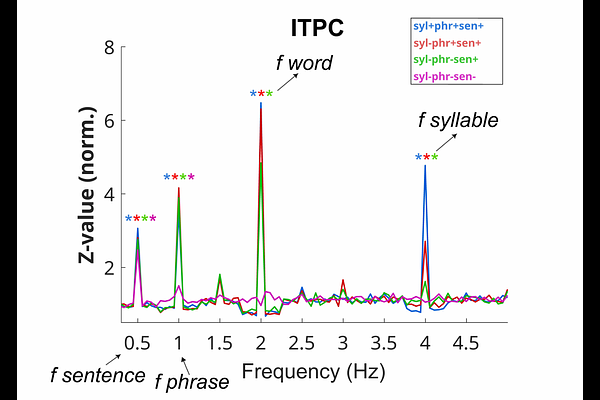

Abstract

Speech comprehension involves the inference of abstract information from continuous acoustic signals. Prior work suggests that electrophysiological activity is synchronized with abstract linguistic structures (phrases and sentences) during the processing of isochronous syllable sequences. It remains unclear whether this evidence generalizes to natural speech comprehension, which requires the flexible processing of continuous speech in which syllables and other linguistic units are anisochronous. Our magnetoencephalography experiment investigated neural synchronization to acoustic units (syllables) and abstract units (phrases and sentences) using continuous speech ranging from artificial isochronous speech to more natural anisochronous speech. We find that neural synchronization to phrases and sentences, but not to syllables, is resilient to naturalistic anisochrony. This suggests that linguistic structure processing reflects endogenous inferences that are fundamentally distinct from the exogenous processing of syllables, which is driven by speech acoustics. Lateralization and linear regression results extend this functional dissociation to hemispheric asymmetry: stimulus-independent leftward lateralization for linguistic structure processing, but stimulus-driven rightward lateralization (or bilaterality) for both syllable and acoustic processing. Our findings provide a more realistic characterization of the flexible neural mechanisms supporting the efficient comprehension of natural speech.