Adaptive integration of model-based and model-free strategies in human reinforcement learning of reachable space

Zhu, T.; Syan, R.; Vejandla, S.; Gallivan, J. P.; Wolpert, D. M.; Flanagan, J. R.

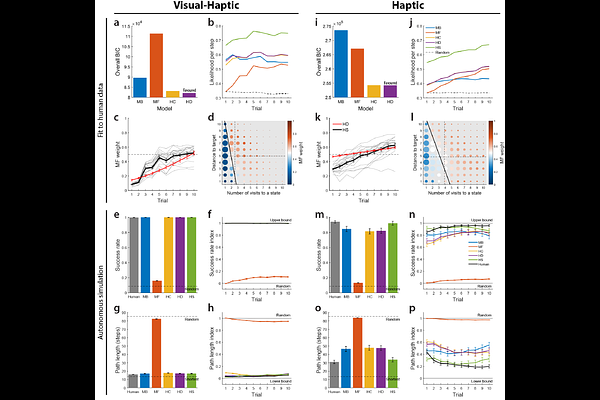

Abstract

Most skilled behaviour occurs within reachable space; however, how humans learn to reach around obstacles in this space remains almost entirely unexplored. Here, using a novel robotic maze task that captures the richness of naturalistic hand-object interaction, we show that humans adaptively integrate model-based and model-free reinforcement learning strategies to act in reachable space. Fitting hybrid models to reach trajectories revealed that participants shifted from model-based toward model-free strategies across learning. Specifically, model-free reliance increased with state familiarity and distance from the goal, and with exclusive haptic feedback. Across participants, greater model-free reliance was associated with faster movements, consistent with reduced planning demands. Critically, direct comparison with an analogous virtual navigation task revealed stronger model-free reliance in reachable space than in navigable space, demonstrating that the computational architecture governing spatial learning is shared across scales but calibrated to the costs and constraints of the specific effector system.